Websites, including those of Arizona businesses, are visited by both human users and robots (crawlers) like Google’s. In 1994, the robots.txt convention was introduced, allowing website owners to request that crawlers avoid specific parts of their sites. It is a request rather than a security measure: well-behaved crawlers honor it, but it does not protect files from access. For WordPress users, a default robots.txt file is automatically generated that asks crawlers to stay out of admin areas such as /wp-admin/.

Google Search Console flags files blocked by robots.txt in its Page Indexing Report. Arizona business owners can access this by logging into Google Search Console and navigating to the report. Standard WordPress setups rarely encounter issues, but if pages are blocked, the report will display a “Blocked by robots.txt” entry.

The report includes:

- A Graph: Tracks the number of blocked pages over time. For example, a sudden spike usually means a new or changed robots.txt rule has started blocking pages that were previously crawlable.

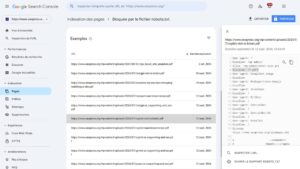

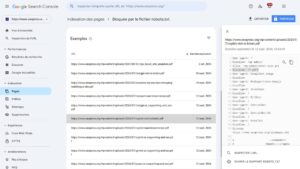

- A List of Blocked Pages: Often includes file types like PDFs. For instance, a site might show all blocked files ending in “.pdf” due to a rule like Disallow: /*.pdf$, where * matches any sequence of characters and $ marks the end of the URL.

Clicking a blocked page reveals a pop-up displaying the robots.txt file, highlighting the specific code causing the block. For example, at seopress.org, PDFs are intentionally blocked, which aligns with their strategy. However, Arizona businesses may find pages listed that they want Google to index, indicating an error to fix.
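To see why a rule like Disallow: /*.pdf$ catches every PDF, it can help to translate the pattern by hand. The following is a minimal Python sketch, assuming the wildcard behavior Google documents for robots.txt (* matches any characters, a trailing $ anchors the end of the URL). The function names `rule_to_regex` and `is_blocked` are made up for illustration, and the sketch ignores Allow rules and rule precedence.

```python
import re


def rule_to_regex(pattern: str) -> re.Pattern:
    """Translate one Disallow pattern into a regular expression (illustrative helper)."""
    anchored = pattern.endswith("$")
    if anchored:
        pattern = pattern[:-1]
    # Escape literal text; each '*' becomes "match any sequence of characters".
    regex = ".*".join(re.escape(part) for part in pattern.split("*"))
    if anchored:
        regex += r"\Z"  # a trailing '$' means the URL must end here
    return re.compile(regex)


def is_blocked(path: str, disallow_pattern: str) -> bool:
    """Return True if the path matches the pattern from the start of the path."""
    if not disallow_pattern:
        return False  # an empty Disallow line blocks nothing
    return rule_to_regex(disallow_pattern).match(path) is not None


if __name__ == "__main__":
    for path in ["/files/menu.pdf", "/files/menu.pdf?download=1", "/menu/"]:
        print(path, is_blocked(path, "/*.pdf$"))
```

Under these assumptions, /files/menu.pdf is blocked, while /files/menu.pdf?download=1 is not, because the $ requires the URL to end in .pdf.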

Addressing “Indexed, Though Blocked” Issues

The Page Indexing Report may also show pages marked as “Indexed, though blocked by robots.txt.” Google explains that if another site links to a blocked page, it may still index it based on the linking page’s information without crawling the blocked content (https://developers.google.com/search/docs/advanced/robots/robots_txt). Using robots.txt to prevent indexing is therefore unreliable. A noindex tag works only if Google can crawl the page and read it, so the block has to be lifted first. To remove a page from Google’s index, Arizona businesses should:

- Remove the robots.txt block.

- Add a noindex robots meta tag to the page.
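As a rough way to confirm that the second step took effect, the page’s HTML can be checked for a robots meta tag. The sketch below uses only Python’s standard library; the class and function names are hypothetical, and it inspects only meta tags in the HTML (not the X-Robots-Tag HTTP header, which can also carry noindex).

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots"> or <meta name="googlebot"> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            for directive in attr.get("content", "").split(","):
                self.directives.add(directive.strip().lower())


def has_noindex(html: str) -> bool:
    """Return True if the HTML contains a robots meta tag with a noindex directive."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives


if __name__ == "__main__":
    page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
    print(has_noindex(page))
```

In practice the HTML would come from fetching the live page; that step is left out here to keep the example self-contained.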

Fixing Robots.txt Errors in WordPress

Google’s guide, Unblock a page blocked by robots.txt (https://support.google.com/webmasters/answer/10574623), recommends using an external validator to test URLs blocked by robots.txt. Tools like the Robots.txt Validator and Testing Tool from Dentsu can help Arizona businesses identify issues.
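For a quick local check alongside an online validator, Python’s standard library includes a basic robots.txt parser. The sketch below is illustrative only: the rules, the example.com URLs, and the `blocked_urls` helper are assumptions, not taken from any real site. Note that `urllib.robotparser` does plain prefix matching and does not implement Google’s * and $ wildcards, so its answers can differ from Googlebot’s on files that use them.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content used only for this example.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt text disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


if __name__ == "__main__":
    candidates = [
        "https://example.com/private/brochure.pdf",
        "https://example.com/menu/",
    ]
    print(blocked_urls(ROBOTS_TXT, candidates))
```

Any URL this reports as blocked but that should appear in Google is a candidate for the robots.txt fix described next.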

To modify the robots.txt file, follow this SEOPress tutorial: How to Set Up and Modify the Robots.txt File. This guide is particularly useful for WordPress users managing their site’s crawlability.

Validating Fixes

After updating the robots.txt file, Arizona businesses can confirm Google is no longer blocked by returning to the Page Indexing Report and clicking the VALIDATE FIX button. Validation may take up to two weeks. Validation is optional, since Google recrawls pages on its own schedule, but requesting it prompts a recheck and reports whether the fix worked.

Why This Matters for Arizona Businesses

Proper robots.txt management ensures Google crawls and indexes the right pages, boosting visibility for Arizona businesses. Regularly checking Google Search Console and correcting unintended blocks can improve search rankings and drive more local traffic. For businesses relying on specific file types, like PDFs for menus or brochures, ensuring these are crawlable (if desired) is critical for SEO success.